Browser AI: Real Workloads, Zero Cloud

Alps Wang

Mar 24, 2026 · 1 views

Decentralizing Intelligence

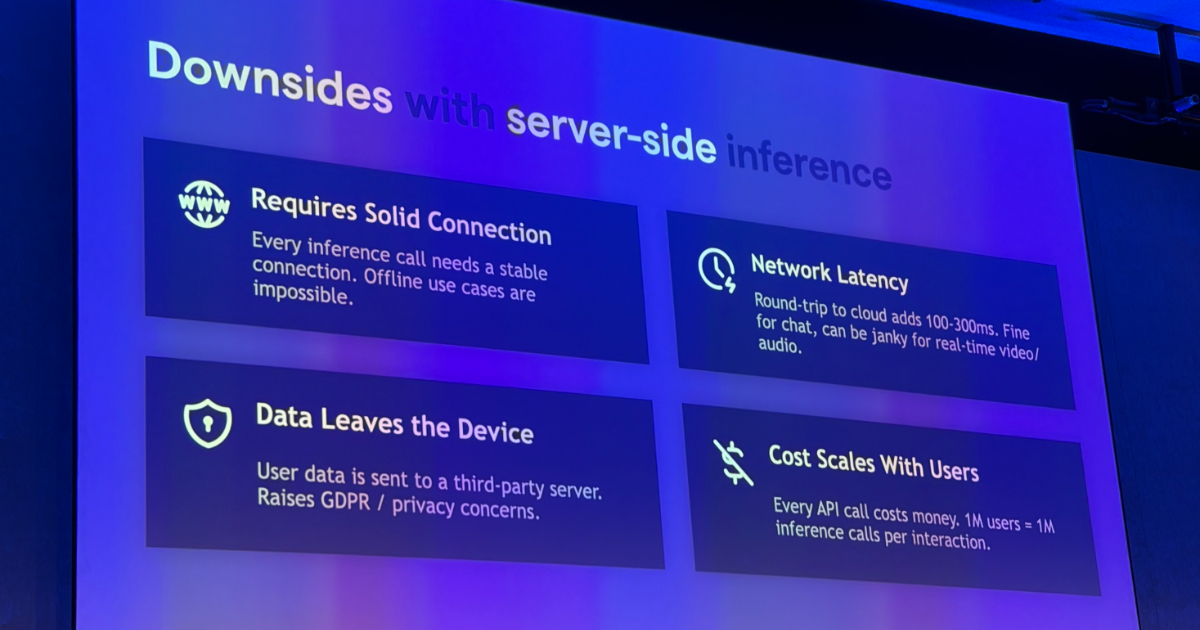

The QCon London presentation by James Hall on running AI workloads directly in the browser represents a pivotal moment in edge AI, moving beyond theoretical possibilities to practical, developer-accessible tools. The emphasis on privacy, reduced latency, and cost efficiency as primary drivers for browser-native inference is compelling. Hall's demonstration of tools like Transformers.js, WebLLM, and the integration with WebGPU highlights a mature ecosystem capable of handling sophisticated tasks, from near-human transcription with Whisper to complex data analytics combining DuckDB and local LLMs. The architectural privacy argument, where data never leaves the user's device by design, is particularly strong in an era of increasing data sensitivity. The practical advice on UI design, model loading, and evaluation, focusing on measurement and validation, provides crucial guidance for developers venturing into this space. This approach not only democratizes AI capabilities but also fundamentally alters the cost structure and user experience for AI-powered applications.

However, the inherent constraints of client-side hardware and model size optimization remain a key limitation. While 20-billion parameter models are now feasible with quantization and WebGPU acceleration, they still represent a fraction of the capabilities of massive cloud-based models. The performance trade-offs, even with hardware acceleration, will vary significantly across devices, potentially leading to an inconsistent user experience. The article touches on this by recommending browser AI when privacy, latency, offline capability, or cost predictability matter enough to outweigh these constraints. Furthermore, the adoption of new APIs like WebNN is still in progress, meaning full hardware acceleration across all specialized NPUs might not be universally available immediately. The development and debugging of complex AI pipelines within the browser environment also present new challenges that the article acknowledges implicitly through its focus on robust evaluation methodologies. Despite these challenges, the momentum towards client-side AI, driven by these advancements, is undeniable and promises to reshape how we interact with intelligent applications.

Key Points

- Running AI workloads directly in the browser eliminates privacy concerns, network latency, and escalating cloud costs.

- Technologies like Transformers.js, WebLLM, and WebGPU enable practical AI inference locally.

- Hardware acceleration via WebGPU is widely supported, with WebNN promising future access to specialized NPUs.

- Practical use cases include local transcription (Whisper) and data analytics with in-browser DuckDB and LLMs.

- Design principles emphasize structured suggestions over chatbots and hiding model loading times.

- Model optimization through quantization is crucial for reducing size with minimal quality loss.

- Browser inference is ideal when privacy, latency, offline capability, or cost predictability outweigh hardware constraints.

📖 Source: QCon London 2026: Running AI at the Edge - Running Real Workloads Directly in the Browser

Related Articles

Comments (0)

No comments yet. Be the first to comment!