Sora 2: Safety First in AI Video Creation

Alps Wang

Mar 24, 2026

Sora 2: Guardrails for Generative Video

OpenAI's announcement of Sora 2 and its accompanying app puts safety at the center, an important step toward the responsible deployment of advanced generative AI. Provenance signals, including visible and invisible watermarks and C2PA metadata, are meant to combat misinformation and establish content authenticity. Strict guardrails for image-to-video generation involving real people, particularly children, together with the consent-based character likeness feature, show a proactive approach to user privacy and control. Layered safety measures filter content both at creation and during feed scanning, and user recourse mechanisms such as reporting and blocking aim to create a more secure environment. This focus matters as AI video generation moves from experimental systems to widely accessible tools.

However, several aspects warrant deeper consideration. The source article highlights robust safeguards, but how well these systems hold up against sophisticated adversarial attacks remains a key concern. The 'red teaming' it mentions is positive, yet because AI capabilities keep evolving, safety measures must be refined continuously as well. The consent mechanism for image-to-video generation, while necessary, relies heavily on user attestation, which could become a point of vulnerability if it is not rigorously enforced or monitored. Likewise, the 'stricter safety guardrails' for children and young-looking persons are welcome, but deciding who counts as 'young-looking' is subjective, and detecting it requires advanced, continuously updated AI models. The source article also touches on audio safeguards, but voice cloning and music imitation are complex problems that call for ongoing innovation and robust legal frameworks. The success of these measures will ultimately depend on their implementation, continuous improvement, and independent auditing.

This development benefits a wide range of users, from individual creators and artists exploring new forms of expression to businesses producing marketing or educational content. Developers and researchers may find the technical details on provenance and filtering a useful starting point for further work in AI safety and content authentication. For the broader public, the emphasis on safety and transparency is crucial for building trust in AI-generated media. The technical implications are significant: ethical considerations have to be built into AI model design from the ground up. The implicit comparison is with Sora 1 and other generative models, where safety and control were perhaps less integrated or sophisticated. The challenge lies in balancing creative freedom with the need to prevent misuse, a tightrope that OpenAI appears to be walking with deliberate caution in this release.

Key Points

- Sora 2 introduces robust safety features, including visible and invisible provenance signals and C2PA metadata for content authenticity.

- Image-to-video generation with real people has strict guardrails, requiring user consent and heightened moderation for depictions of children.

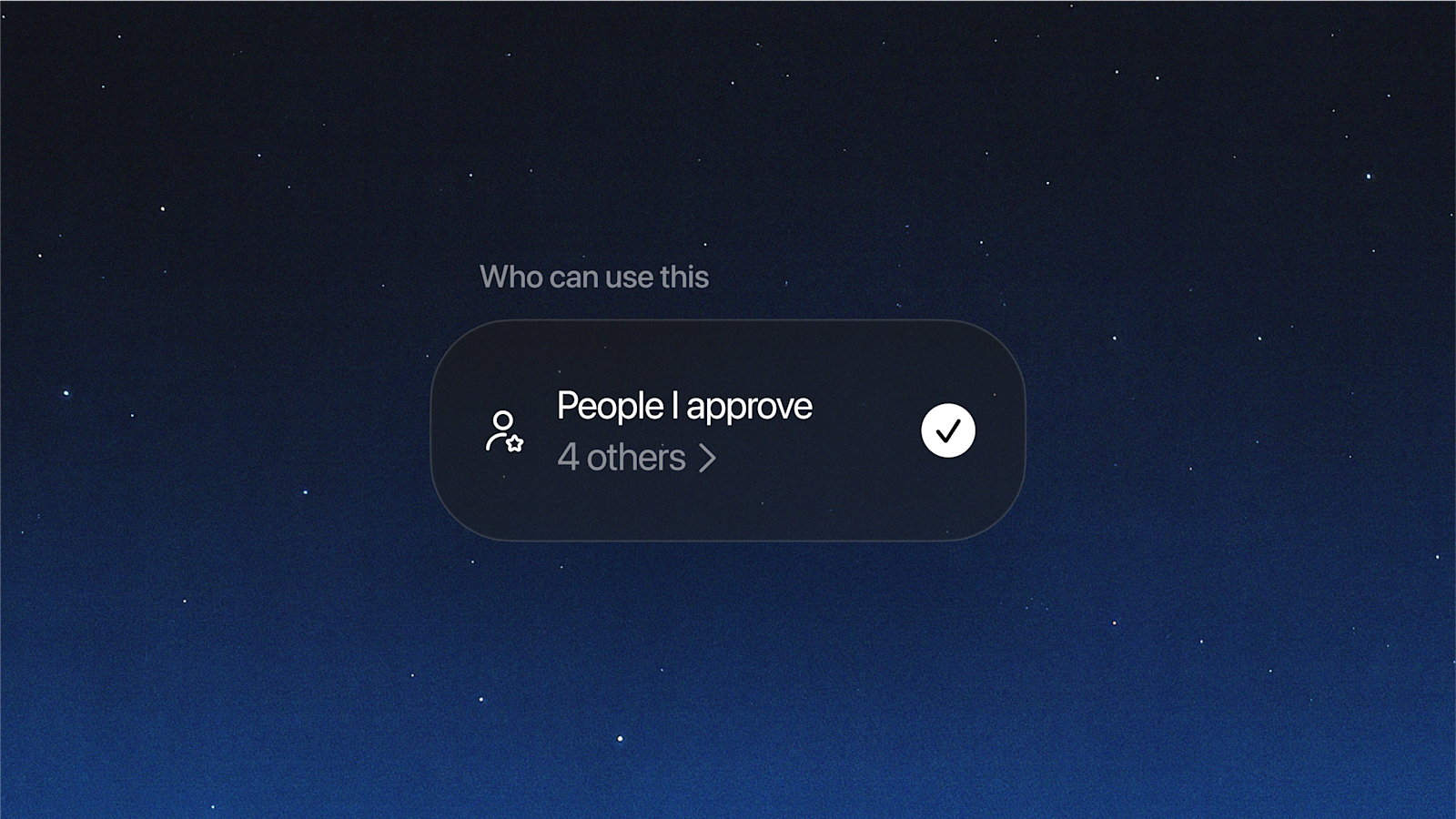

- Consent-based character likeness provides users with control over their appearance and voice, with revocation options.

- Teen users receive enhanced protections, including content filtering and limits on interactions with adults.

- Layered defenses at creation and feed scanning filter harmful content, with ongoing red teaming and human review.

- Audio safeguards include scanning speech transcripts and blocking imitation of living artists or existing works.
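
To make the idea of layered defenses concrete, here is a minimal Python sketch of a two-stage moderation flow: one layer reviews the prompt at creation time, and a second layer reviews the speech transcript before the video reaches a feed. This is an illustration only, not OpenAI's implementation. The names `Layer`, `blocklist_check`, and `moderate`, and the placeholder phrases, are all hypothetical, and simple keyword matching stands in for the classifiers and human review a production system would use.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Hypothetical check type: returns a reason string when content is
# flagged, or None when the content passes.
Check = Callable[[str], Optional[str]]


def blocklist_check(terms: List[str], label: str) -> Check:
    """Build a toy keyword check (terms must be lowercase).

    Assumption: keyword matching is only a stand-in for real classifiers.
    """
    def check(text: str) -> Optional[str]:
        lowered = text.lower()
        for term in terms:
            if term in lowered:
                return f"{label}: matched '{term}'"
        return None
    return check


@dataclass
class Layer:
    """One defensive layer holding an ordered list of checks."""
    name: str
    checks: List[Check] = field(default_factory=list)

    def review(self, text: str) -> Optional[str]:
        # Return the first flag raised by any check in this layer.
        for check in self.checks:
            reason = check(text)
            if reason is not None:
                return f"[{self.name}] {reason}"
        return None


def moderate(prompt: str, transcript: str, creation: Layer, feed: Layer) -> str:
    """Run the creation-time layer first, then the feed-scanning layer."""
    reason = creation.review(prompt)
    if reason is not None:
        return f"blocked {reason}"   # nothing is generated
    reason = feed.review(transcript)
    if reason is not None:
        return f"held {reason}"      # generated, but kept out of the feed
    return "published"


# Placeholder phrases; these are not real policy terms.
creation_layer = Layer("creation", [blocklist_check(["example-banned-prompt"], "prompt-filter")])
feed_layer = Layer("feed-scan", [blocklist_check(["example-banned-speech"], "transcript-filter")])

print(moderate("a cat surfing", "hello world", creation_layer, feed_layer))
print(moderate("a cat surfing", "says example-banned-speech", creation_layer, feed_layer))
```

The point of the two layers is that content which slips past the prompt check can still be caught later, once the generated audio has been transcribed.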

📖 Source: Creating with Sora Safely
