Netflix Unlocks Video Search with Multimodal AI

Alps Wang

Apr 4, 2026

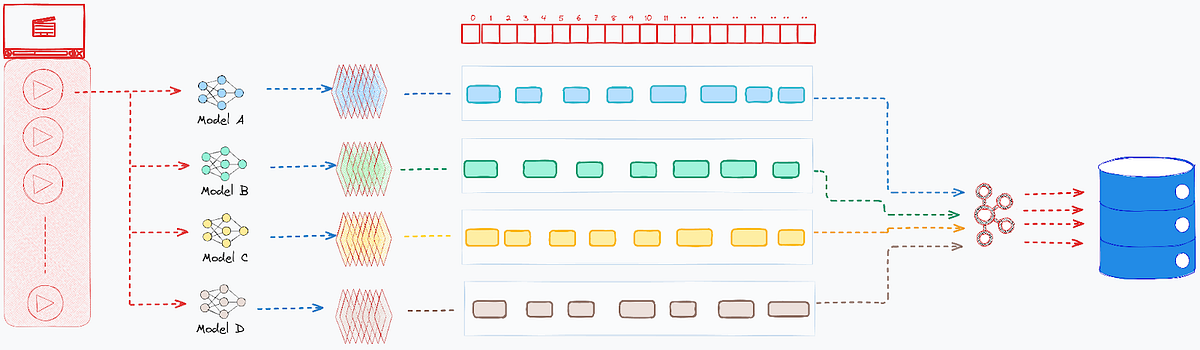

Orchestrating Senses for Video Insight

The Netflix Tech Blog post 'Powering Multimodal Intelligence for Video Search' offers a compelling deep dive into a hard technical problem: unifying the outputs of disparate AI models into a cohesive, real-time search experience for video content. The key innovation is a carefully designed three-stage pipeline: transactional persistence in Cassandra for robust ingestion, asynchronous offline data fusion via Kafka and temporal bucketing for the heavy computation, and indexing into Elasticsearch for real-time search. Separating these stages addresses the core bottlenecks of scale, temporal synchronization, and data harmonization. The emphasis on zero-friction search, aiming for 'speed of thought' interaction, is particularly noteworthy because it targets a critical pain point for creative professionals. The detailed explanation of the indexing strategy, including nested documents in Elasticsearch and fine-grained search tuning (exact vs. approximate search, dynamic similarity metrics, confidence thresholding), shows a sophisticated understanding of high-dimensional data retrieval. The approach to textual analysis, which incorporates phrase/proximity matching, N-gram analysis, stemming, and fuzzy matching, likewise shows a commitment to linguistic precision even in a visual medium. Finally, the 'Union' vs. 'Intersection' logic for result reconstruction is a thoughtful touch that caters to different search intents.
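The fusion and reconstruction steps described above can be illustrated with a small sketch. It assumes a hypothetical `Annotation` record per model output, a fixed bucket width of 2 seconds, and a confidence cutoff of 0.5; none of these names or values come from the Netflix post. Each annotation above the cutoff is placed into every time bucket its span overlaps, and a query is then answered per bucket with either 'Intersection' (all terms present) or 'Union' (any term present) semantics.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Annotation:
    """Hypothetical record for one model's output over a span of video."""
    model: str         # which model produced it (illustrative names below)
    label: str         # detected object, transcribed word, etc.
    start_s: float     # span start in seconds
    end_s: float       # span end in seconds (exclusive)
    confidence: float  # model confidence in [0, 1]


BUCKET_SECONDS = 2.0  # assumed bucket width; not taken from the article


def bucket_ids(start_s, end_s, width=BUCKET_SECONDS):
    """Indices of every fixed-width bucket overlapped by the span [start_s, end_s)."""
    first = math.floor(start_s / width)
    last = max(first, math.ceil(end_s / width) - 1)
    return range(first, last + 1)


def fuse(annotations, min_confidence=0.5, width=BUCKET_SECONDS):
    """Map bucket index -> {label: set of models that reported it in that bucket}."""
    buckets = defaultdict(lambda: defaultdict(set))
    for a in annotations:
        if a.confidence < min_confidence:
            continue  # confidence thresholding: skip low-confidence detections
        for b in bucket_ids(a.start_s, a.end_s, width):
            buckets[b][a.label].add(a.model)
    return buckets


def search(buckets, terms, mode="intersection"):
    """Bucket indices containing all terms ("intersection") or any term ("union")."""
    combine = {"intersection": all, "union": any}[mode]
    return sorted(b for b, labels in buckets.items()
                  if combine(t in labels for t in terms))


annotations = [
    Annotation("object_detector", "car", 0.0, 3.0, 0.9),
    Annotation("speech_to_text", "explosion", 2.5, 4.0, 0.8),
    Annotation("object_detector", "dog", 5.0, 6.0, 0.3),  # dropped: below 0.5
]
buckets = fuse(annotations)
print(search(buckets, ["car", "explosion"], mode="intersection"))  # [1]
print(search(buckets, ["car", "explosion"], mode="union"))         # [0, 1]
```

Here a car detected from 0 to 3 seconds and the word 'explosion' heard from 2.5 to 4 seconds co-occur only in bucket 1, so the Intersection query returns that bucket alone while the Union query returns both. In the architecture the article describes, the fused buckets would be indexed in Elasticsearch rather than kept in an in-memory dictionary.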

However, while the article excels at explaining how Netflix built the system, it could say more about why certain choices were made, beyond the immediate benefits for Netflix's editorial teams. For instance, the trade-offs behind choosing Cassandra over other distributed databases for transactional persistence, or Elasticsearch as the final search index, could offer broader lessons. The 'future extensions' section hints at more ambitious goals such as natural language discovery and adaptive ranking, which are crucial for truly intuitive AI interfaces; the current implementation, while impressive, still relies on structured queries. The potential for bias in the AI models used for annotation, and how Netflix mitigates it to keep search results fair and representative across diverse content, could also be explored further. Finally, the volume of data processed and the computational resources required raise questions about cost-effectiveness and environmental impact, which are increasingly important considerations in large-scale AI deployments. Despite these points, the article is a significant contribution, offering a blueprint for multimodal data integration at large scale.

Key Points

- The article details Netflix's innovative approach to multimodal video search, unifying outputs from specialized AI models.

- The core architecture is a three-stage pipeline: transactional persistence (Cassandra), offline data fusion (Kafka), and real-time indexing (Elasticsearch).

- The system addresses challenges like temporal synchronization, massive data scale, and surfacing the best moments through techniques like temporal bucketing and hybrid scoring.

- Advanced search features include fine-grained control over similarity metrics, confidence thresholding, and sophisticated text analysis for linguistic precision.

- Future plans involve natural language discovery and adaptive ranking to further enhance user interaction and search relevance.
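How the lexical and vector signals are combined in hybrid scoring is not described here, so the sketch below uses one common, simple choice as an assumption: min-max normalize each signal, then blend them linearly with a weight `alpha`. It also exposes the other tuning knobs listed above as parameters: a selectable similarity metric (`cosine`, `dot_product`, or negated Euclidean distance) and a score threshold. All function names and example data are hypothetical. The scoring loop is exact brute-force search over every candidate; at large scale, approximate nearest-neighbor search is the usual alternative, trading some recall for speed.

```python
import math


# Candidate similarity metrics; each returns a value where higher means more similar.
def cosine(a, b):
    norm = math.hypot(*a) * math.hypot(*b)
    return sum(x * y for x, y in zip(a, b)) / norm if norm else 0.0


def dot_product(a, b):
    return sum(x * y for x, y in zip(a, b))


def neg_euclidean(a, b):
    return -math.dist(a, b)  # negated so that closer vectors score higher


METRICS = {"cosine": cosine, "dot_product": dot_product, "l2": neg_euclidean}


def min_max(scores):
    """Rescale {doc_id: score} to [0, 1] so different signals can be blended."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {k: 1.0 for k in scores}
    return {k: (v - lo) / (hi - lo) for k, v in scores.items()}


def hybrid_rank(query_vec, embeddings, text_scores, alpha=0.5,
                metric="cosine", threshold=0.0):
    """Blend exact (brute-force) vector similarity with lexical scores.

    embeddings:  {doc_id: vector} for every candidate moment
    text_scores: {doc_id: lexical score such as BM25}; missing ids count as 0
    alpha:       weight on the vector signal; 1 - alpha goes to the text signal
    threshold:   blended scores below this cutoff are dropped
    """
    sim = METRICS[metric]
    vec = min_max({d: sim(query_vec, v) for d, v in embeddings.items()})
    txt = min_max({d: text_scores.get(d, 0.0) for d in embeddings})
    blended = {d: alpha * vec[d] + (1 - alpha) * txt[d] for d in embeddings}
    ranked = sorted(blended.items(), key=lambda kv: kv[1], reverse=True)
    return [(d, round(s, 3)) for d, s in ranked if s >= threshold]


embeddings = {"m1": [1.0, 0.0], "m2": [0.6, 0.8], "m3": [0.0, 1.0]}
text_scores = {"m1": 2.0, "m3": 6.0}
print(hybrid_rank([1.0, 0.0], embeddings, text_scores, threshold=0.4))
# [('m1', 0.667), ('m3', 0.5)]
```

Normalizing before blending matters because raw lexical scores and similarity values live on different scales; without it, whichever signal has the larger range would dominate regardless of `alpha`.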
