LinkedIn's CMA: AI Agents Get a Memory

Alps Wang

Apr 21, 2026

Beyond Stateless: LinkedIn's Memory-Driven Agents

LinkedIn's Cognitive Memory Agent (CMA) tackles a core obstacle to production-grade AI agents: Large Language Models (LLMs) are stateless and retain nothing between calls. CMA adds a dedicated memory infrastructure layer, divided into episodic, semantic, and procedural memory, so that agents can hold stateful, context-aware interactions. The architecture targets continuity, personalization, and less redundant reasoning, all of which matter for applications like the Hiring Assistant. Its role as a shared memory substrate for multi-agent systems could also streamline complex workflows and improve coordination. The acknowledgement of operational challenges, such as cache invalidation and staleness management, shows a realistic view of distributed-systems complexity and makes the solution more credible.

However, CMA's success hinges on how well its retrieval and lifecycle management work, particularly relevance ranking and keeping evolving user context consistent. The source article names these as engineering challenges but does not describe how they are solved. Human validation is mentioned for high-stakes scenarios, yet how such human-in-the-loop steps scale and fit into an otherwise automated agentic system remains an open question. The article also implicitly assumes a robust data pipeline for populating and maintaining semantic and procedural memory, which can itself be a significant undertaking. Ultimately, CMA is a promising architectural pattern that moves agentic AI from early experimentation toward robust, scalable production systems, but its real impact will depend on practical implementation and long-term maintenance.
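To make the relevance-ranking and staleness concerns concrete, here is a minimal Python sketch. It is not LinkedIn's implementation, which the source does not describe. The `MemoryItem` type, the `similarity` field (assumed to come from some upstream retriever), the 30-day half-life, and the 180-day cutoff are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    text: str
    similarity: float  # assumed relevance score from an upstream retriever, in [0, 1]
    age_days: float    # time since the memory was written or last confirmed


def score(item, half_life_days=30.0):
    """Discount similarity by recency: the weight halves every half_life_days."""
    decay = 0.5 ** (item.age_days / half_life_days)
    return item.similarity * decay


def retrieve(items, top_k=2, max_age_days=180.0):
    """Drop memories past a hard staleness cutoff, then rank the rest."""
    fresh = [item for item in items if item.age_days <= max_age_days]
    return sorted(fresh, key=score, reverse=True)[:top_k]


items = [
    MemoryItem("Prefers remote candidates", similarity=0.9, age_days=60),
    MemoryItem("Now hiring on-site in Berlin", similarity=0.8, age_days=0),
    MemoryItem("Old requisition for interns", similarity=0.95, age_days=400),
]

for item in retrieve(items):
    print(f"{score(item):.3f}  {item.text}")
```

In this toy setup the newer, slightly less similar memory outranks the older one, and the 400-day-old entry is excluded entirely. A real system would also need to reconcile the two conflicting preferences, which is the consistency problem noted above.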

Key Points

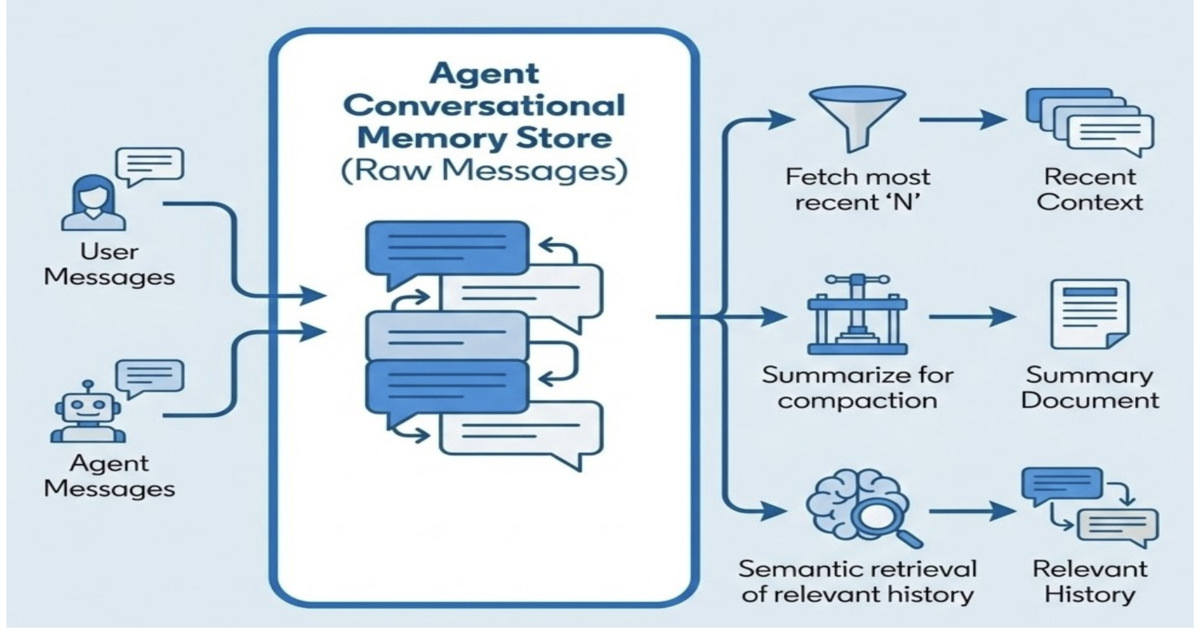

- LinkedIn's Cognitive Memory Agent (CMA) addresses the statelessness limitation of LLMs by providing a dedicated memory infrastructure layer.

- CMA organizes memory into three distinct layers: episodic (interaction history), semantic (structured knowledge), and procedural (workflows/patterns).

- It enables stateful, context-aware AI systems that retain and reuse knowledge across interactions, improving personalization and reducing redundant reasoning.

- CMA serves as a shared memory substrate in multi-agent systems, reducing state duplication and improving coordination.

- Key engineering challenges include relevance ranking, staleness management, and consistency of evolving user context.

- Human validation is incorporated for high-stakes applications to ensure alignment with user intent.
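The three layers and the shared-substrate idea from the points above can be pictured with a small Python sketch. This is an illustrative model only: the `MemoryStore` class, its method names, and the example identifiers are assumptions, and the source does not publish CMA's actual data model or API.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Hypothetical shared memory substrate with three layers."""
    # Episodic: ordered interaction history per user.
    episodic: dict = field(default_factory=lambda: defaultdict(list))
    # Semantic: structured facts per user, keyed by attribute name.
    semantic: dict = field(default_factory=lambda: defaultdict(dict))
    # Procedural: named workflows stored as ordered step lists.
    procedural: dict = field(default_factory=dict)

    def record_interaction(self, user_id, text):
        self.episodic[user_id].append(text)

    def set_fact(self, user_id, key, value):
        self.semantic[user_id][key] = value

    def register_workflow(self, name, steps):
        self.procedural[name] = list(steps)

    def build_context(self, user_id, workflow, last_n=3):
        """Assemble the state an agent would pass along with a stateless LLM call."""
        return {
            "recent_history": self.episodic[user_id][-last_n:],
            "facts": dict(self.semantic[user_id]),
            "steps": list(self.procedural.get(workflow, [])),
        }


store = MemoryStore()
store.record_interaction("recruiter-1", "Asked for backend engineers in Berlin")
store.set_fact("recruiter-1", "preferred_location", "Berlin")
store.register_workflow("candidate_search", ["parse_role", "search", "rank"])

# Two hypothetical agents read the same store instead of keeping private copies.
sourcing_context = store.build_context("recruiter-1", "candidate_search")
outreach_context = store.build_context("recruiter-1", "candidate_search")
print(sourcing_context)
```

Because both agents build their context from one store, a fact written once is visible to each of them, which is the state-duplication saving the shared substrate is meant to provide.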

📖 Source: Designing Memory for AI Agents: Inside Linkedin’s Cognitive Memory Agent
