DoorDash's AI Safety: Cutting Incidents by 50%

Alps Wang

Jan 24, 2026

AI Safety: The DoorDash Approach

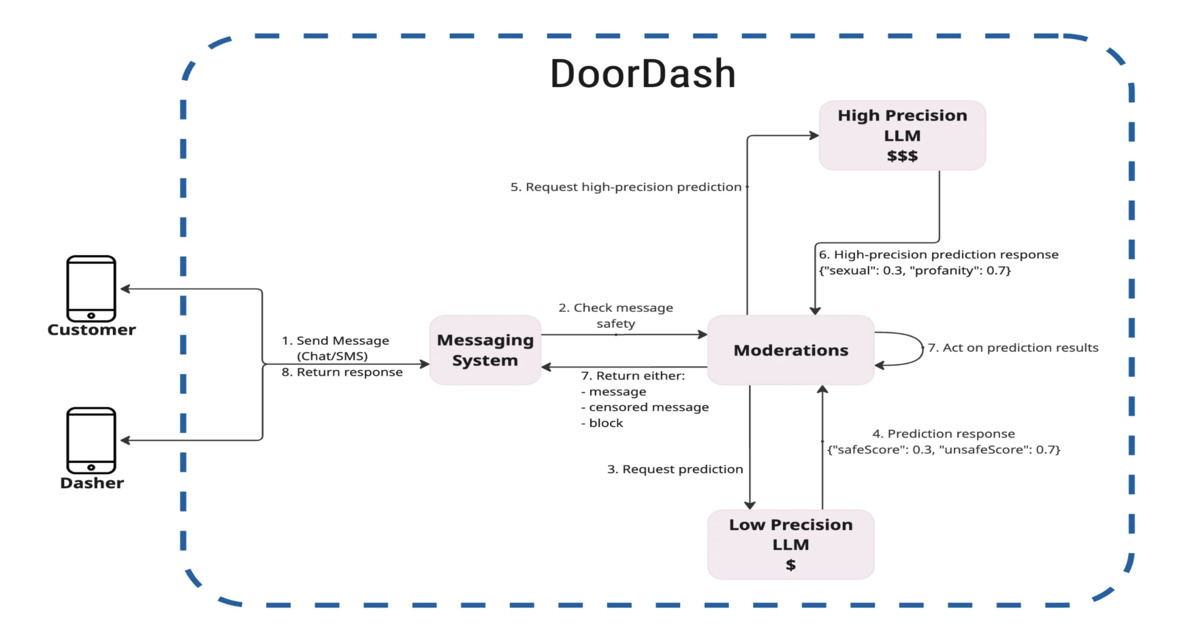

DoorDash's SafeChat is a useful case study in applying AI to platform safety and user experience. Its layered architecture pairs low-cost models with high-precision models and human review, a practical design for real-time content moderation. The reported 50% reduction in low- and medium-severity safety incidents is a strong indicator of success. Also noteworthy is the use of internal models trained on initial data, which improved scalability and reduced costs.

The article has gaps, however. It does not specify the machine learning models employed, such as which LLMs were used or how the internal models are architected. It mentions iterative human review for tuning thresholds, but does not explain how human feedback is collected or integrated into model training. How DoorDash mitigates potential bias in the training data and in the LLMs themselves also warrants further investigation. Because the focus is on architecture and outcomes rather than specific model choices, developers looking to replicate or adapt these techniques will find the system hard to reproduce directly.
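Since the article does not describe the models themselves, the layered flow can only be illustrated in outline. The following is a minimal sketch, not DoorDash's implementation: the `moderate` function, the `Decision` type, the threshold values, and the toy keyword scorers are all hypothetical stand-ins. It shows the general shape of a cascade in which a cheap model clears obviously safe messages, a more precise model handles the rest, and ambiguous cases go to human review.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical scorer type: takes a message, returns a risk score in [0, 1].
Scorer = Callable[[str], float]


@dataclass
class Decision:
    action: str   # "allow", "block", or "human_review"
    layer: str    # which layer produced the final score
    score: float


def moderate(
    message: str,
    cheap: Scorer,
    precise: Scorer,
    clear_below: float = 0.2,   # assumed threshold, would be tuned via human review
    block_above: float = 0.9,   # assumed threshold, would be tuned via human review
) -> Decision:
    # Layer 1: the low-cost model clears obviously safe content,
    # so most traffic never reaches the expensive model.
    s1 = cheap(message)
    if s1 < clear_below:
        return Decision("allow", "cheap", s1)

    # Layer 2: the high-precision model scores whatever was not cleared.
    s2 = precise(message)
    if s2 >= block_above:
        return Decision("block", "precise", s2)
    if s2 < clear_below:
        return Decision("allow", "precise", s2)

    # Layer 3: anything still ambiguous is escalated to a human reviewer.
    return Decision("human_review", "precise", s2)


# Toy scorers for illustration only; real layers would call a
# moderation API or an LLM rather than match keywords.
def toy_cheap(msg: str) -> float:
    flagged = ("idiot", "threat")
    return 0.8 if any(w in msg.lower() for w in flagged) else 0.05


def toy_precise(msg: str) -> float:
    return 0.95 if "threat" in msg.lower() else 0.5


if __name__ == "__main__":
    for text in ("Your order is outside", "This is a threat", "You idiot"):
        print(text, "->", moderate(text, toy_cheap, toy_precise))
```

In a design like this, the two thresholds are the natural place for the iterative human review the article mentions: reviewers' verdicts on escalated messages indicate whether `clear_below` and `block_above` should move.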

Key Points

- DoorDash implemented SafeChat, an AI-driven safety system for in-app chat, images, and voice calls.

- The system uses a layered AI architecture combining moderation APIs, LLMs, and human review.

- SafeChat led to a 50% reduction in low- and medium-severity safety incidents.

📖 Source: DoorDash Applies AI to Safety Across Chat and Calls, Cutting Incidents by 50%
