Anthropic's Claude Code Goes Auto: Less Babysitting, More Coding

Alps Wang

May 6, 2026 · 1 views

Autonomous Coding: The Next Frontier

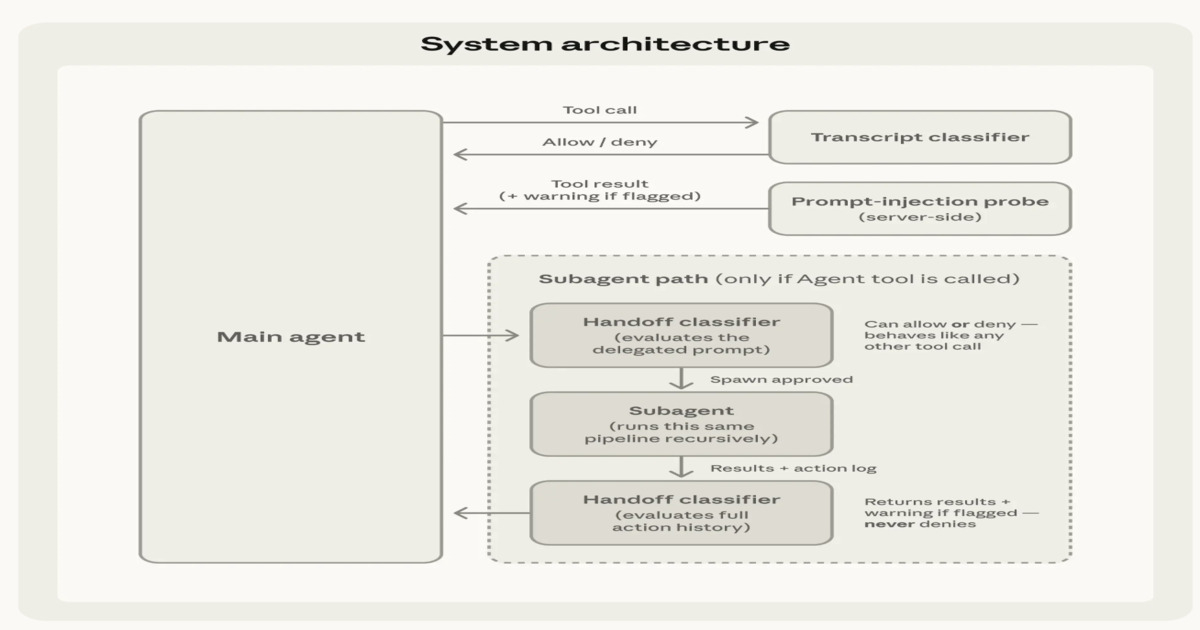

Anthropic's introduction of Auto Mode in Claude Code is a notable step towards more autonomous AI-powered software development. The layered safety and execution architecture, with its two-stage classification for filtering actions, is a crucial innovation. This design aims to strike a balance between reducing developer fatigue from constant approvals and maintaining robust safety mechanisms for sensitive operations. The explicit mention of inspecting tool outputs before incorporation into the system context, and injecting warnings for untrusted content, highlights a thoughtful approach to mitigating risks associated with AI execution. Furthermore, the extension of safety checks to subagent workflows, including outbound and return checks, demonstrates a comprehensive strategy for ensuring integrity in delegated tasks. This move could significantly boost developer productivity by allowing them to 'walk away' from repetitive oversight, as Sid Chaudhary aptly put it, enabling deeper focus on complex problem-solving and creative aspects of development.

However, the article, while informative, could benefit from a deeper dive into the specifics of the 'sensitive operations' requiring human approval. Understanding the criteria for escalation and the types of actions deemed high-risk would provide a clearer picture of the system's limitations and the residual risks users must be aware of. The 'two-stage classification' is promising, but the article leaves room for questions about the efficacy of the 'fast initial filter' and the depth of analysis for escalated operations. While the goal is to make autonomous operation safer than 'no guardrails,' the inherent complexity of software development means that emergent bugs or unintended consequences, even with these safeguards, remain a concern. The article hints at continuous improvement through expanded evaluation sets, which is positive, but the long-term maintainability and scalability of these safety mechanisms in the face of increasingly complex AI models and diverse coding environments will be critical. The onus remains on developers to stay aware of residual risks and report issues, a necessary but potentially burdensome aspect of adopting such advanced AI tools.

Key Points

- Anthropic has introduced 'Auto Mode' in Claude Code, enabling multi-step software development tasks with reduced manual intervention.

- The system handles code generation, execution, tool use, and iterative refinement, with human approval gates for sensitive operations.

- This addresses 'approval fatigue' by moving from a permission-based model to an automated approval mechanism for most actions.

- A layered safety and execution architecture inspects tool outputs and evaluates proposed actions before execution.

- A two-stage classification approach balances efficiency by fast-filtering safe actions and escalating uncertain or risky operations for deeper analysis.

- Safety checks are extended to subagent workflows with outbound and return validation to detect prompt injection or manipulation.

- The goal is to make autonomous operation safer than having no guardrails, while encouraging user awareness of residual risks.

📖 Source: Inside Claude Code Auto Mode: Anthropic’s Autonomous Coding System with Human Approval Gates

Related Articles

Comments (0)

No comments yet. Be the first to comment!